PsychoPy

| Development status | Active |
|---|---|
| Written in | Python |

PsychoPy is an alternative to Presentation, E-Prime and Inquisit. It is a Python library and application for presenting stimuli and collecting data in a wide range of neuroscience, psychology and psychophysics experiments. When used on DCC computers, PsychoPy is guaranteed to be millisecond accurate.

Installation

We recommend using a modern 64-bit version of Python. In our labs, we currently have Python 3.7.6 64-bit installed. You can download it here: Python3.7.6 64 bits (Windows). If you pick a different download, please choose a 64-bit version. Run the installer and make sure to add Python to the PATH (it's an option in the installer).

Download the requirements file from here: PsychopyRequirements3.7.txt. Open a command prompt (with administrator rights), go to the folder that contains the downloaded file and upgrade pip by typing:

python -m pip install --upgrade pip

Then:

pip install -r PsychopyRequirements3.7.txt

This should install everything you need for a basic PsychoPy installation. Since packages are updated once in a while, the requirements file might become outdated at some point. If that happens, please let us know.
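After installing, it can be useful to confirm that the Python you start from the command prompt is really the 64-bit build you intended. The following is a minimal sketch using only the standard library; the function names `interpreter_bits` and `describe_interpreter` are made up for this illustration.

```python
import struct
import sys


def interpreter_bits():
    """Return the pointer size of the running interpreter in bits (32 or 64)."""
    # struct.calcsize("P") is the size of a C pointer in bytes.
    return struct.calcsize("P") * 8


def describe_interpreter():
    """Return a short description such as 'Python 3.7.6 (64-bit)'."""
    version = ".".join(str(part) for part in sys.version_info[:3])
    return "Python {} ({}-bit)".format(version, interpreter_bits())


if __name__ == "__main__":
    print(describe_interpreter())
```

Saved as a script and run with `python`, this prints the version and bitness of whichever interpreter is first on the PATH.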

For Pavlovia users

If you want to upload experiments to Pavlovia, you will need to install Git-2.17.1-64-bit.exe using these instructions: GitInstall.docx. Then, in System | Advanced system settings | Environment variables, add the folder that contains git-daemon.exe to the PATH variable. Usually, that folder is 'C:\Program Files\Git\mingw64\libexec\git-core'.
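To check whether the PATH change took effect, you can ask Python where it finds the Git executables. This is a small sketch based on the standard library function `shutil.which`; the helper name `find_executable` is hypothetical, and you need to open a new command prompt after editing the environment variables before the change becomes visible.

```python
import shutil


def find_executable(name):
    """Return the full path of `name` if it can be found on the PATH, else None."""
    return shutil.which(name)


if __name__ == "__main__":
    # On Windows, shutil.which also tries the usual extensions such as .exe.
    for tool in ("git", "git-daemon"):
        location = find_executable(tool)
        print(tool, "->", location if location else "not found on PATH")
```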

Upgrade from Python 2.7

Python 2.7 reached end of life on January 1, 2020, so it is no longer installed on the PCs in the labs. The standard is now Python 3.7 64-bit. If you still have scripts written in Python 2, they should be upgraded to Python 3. Most of the changes will probably be print statements, which must always have parentheses: print('some text'). Key differences between Python 2 and Python 3 are listed here: https://sebastianraschka.com/Articles/2014_python_2_3_key_diff.html
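As an illustration, the snippet below shows the Python 3 form of a few constructs that commonly break when an old Python 2 script is run. The variable names are invented for the example, and the list is far from complete.

```python
# print is a function in Python 3, so the parentheses are required.
print("some text")

# "/" is true division in Python 3; use "//" when you want integer division.
half = 7 / 2    # 3.5 (Python 2 would have given 3)
whole = 7 // 2  # 3

# dict.keys() returns a view, so wrap it in list() before indexing.
conditions = {"congruent": 1, "incongruent": 2}
first_condition = list(conditions.keys())[0]

# Text is unicode by default; bytes have to be decoded explicitly.
label = b"trial".decode("ascii")
```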

PsychoPy 2020.2.10 has been installed in the root of the Python 3.7 64-bit version. This is the default version that starts when 'psychopy' is typed at the command prompt or when a .py file is double-clicked. It can also be started by clicking the appropriate icon on the desktop. A second PsychoPy 2020.2.10 is installed on Python 3.6 32-bit. This version has its own icon on the desktop and should be used when you are using a Tobii eye tracker.

If your script fails to load in PsychoPy because it needs packages that are not installed on our lab computers, please contact TSG.

On the lab computers, there is support for Spyder, PyCharm and PsychoPy.

For Tobii users

Tobii does not support 64-bit Python or Python versions higher than 3.6.8, so a 32-bit Python 3.6.8 is installed as well. If you want to use a Tobii eye tracker and want to install Python and PsychoPy yourself, download Python here: Python3.6.8 (Windows) or here: List of downloads for Python3.6.8. Please choose a 32-bit version. Run the installer and choose to add Python to the PATH (it's an option in the installer).

Download from here: PsychoPyDependenciesPython3.6.8.txt. Open a command prompt (with administrator rights), go to the folder where the downloaded file is and upgrade the pip installer by typing:

python -m pip install --upgrade pip

Then:

pip install -r PsychopyRequirements3.6.8.txt

Note: in our labs, the 64-bits Python and 64-bits Psychopy are installed as default. The 32-bits Python 3.6.8 and 32-bits Psychopy are installed in a separate virtualenv. If you want to use Python3.6.8 from the command prompt, you first have to type:

workon Python36

After that, everything should work from Python3.6.8, including the 32-bits packages.

For SR-Research Eyelink users

Eyelink users should follow the regular instructions above. Additionally, download the SR-Research Python package from here: pylinkForPython-3.7.5-x64-Win. Unzip it, find the folder called pylink and copy it to your Python's lib/site-packages folder.
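Once the folder has been copied, you can check that Python is able to find the package without actually importing it. The sketch below relies on the standard library function `importlib.util.find_spec`; the helper name `missing_packages` is hypothetical, and it is meant for top-level package names only.

```python
import importlib.util


def missing_packages(names):
    """Return those top-level package names that this Python cannot find."""
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    missing = missing_packages(["psychopy", "pylink"])
    if missing:
        print("Not found:", ", ".join(missing))
    else:
        print("All packages found.")
```

Run it with the same interpreter (or virtualenv) that you use for your experiment, since each environment has its own site-packages folder.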

For SMI RED 500 and SMI HiSpeed Tower users

Include this file into your project: iViewXudp. This should work on both 64-bit and 32-bit Python 3 versions.

Usage

VirtualEnv

Some of the packages installed in the steps above make it possible to use virtualenvs. A virtual environment is a Python environment whose interpreter, libraries and scripts are isolated from those installed in other virtual environments and (by default) from any libraries installed in a “system” Python, i.e., one that is installed as part of your operating system.

If you want to use virtualenvs on your own computer, go to System | Advanced system settings | Environment variables and make a new system variable with name WORKON_HOME and value C:\Users\Public\Envs\. Then make a new system variable with name PROJECT_HOME and value C:\Users\Public\Projects. Your virtualenvs will now be stored in C:\Users\Public\Envs and your projects in C:\Users\Public\Projects. These are also the places where virtualenvs and projects are stored on the lab computers.
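To confirm that both variables are visible to newly started programs, you can read them back from Python. This is a minimal sketch; the function name `virtualenv_locations` is invented here, and the optional `environ` argument exists only so the function can be tried with a plain dictionary.

```python
import os


def virtualenv_locations(environ=None):
    """Return the WORKON_HOME and PROJECT_HOME settings (None when unset)."""
    environ = os.environ if environ is None else environ
    return {
        "WORKON_HOME": environ.get("WORKON_HOME"),
        "PROJECT_HOME": environ.get("PROJECT_HOME"),
    }


if __name__ == "__main__":
    for name, value in virtualenv_locations().items():
        print(name, "=", value if value else "(not set)")
```

Environment variables are read when a program starts, so open a new command prompt after changing them.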

Open a command window with administrator rights and type:

workon to see a list of existing virtualenvs.

workon <virtualenvname>, where <virtualenvname> is the name of the virtualenv you want to use.

mkvirtualenv <virtualenvname> to create a new and empty virtualenv.

mkvirtualenv -p=37 <virtualenvname> to create a new and empty virtualenv that uses the installed Python3.7.6.

mkvirtualenv -p=36 <virtualenvname> to create a new and empty virtualenv that uses the installed Python3.6.8.

mkvirtualenv -p=37 --system-site-packages <virtualenvname> to create a new virtualenv that uses the installed Python3.7 and its site-packages.

rmvirtualenv <virtualenvname> to remove the virtualenv with name <virtualenvname>.

deactivate to return to the defaults.

In our labs, we have the following virtualenvs:

Python36 that contains Python 3.6.8 32-bits, Psychopy and the tobii_research package.

Python37 that contains Python 3.7 64-bits, Psychopy and the pylink package (for use with the Eyelink eyetracker).

and some more virtualenvs that contain packages that would otherwise interfere with Psychopy.

If you need a package that is not installed on our lab computers, contact TSG, so that we can decide whether to add it to our standard installation or to install it in a separate virtualenv. Do not use pip install to install anything in an existing virtualenv unless it is your own: doing so might interfere with existing packages and mess up other people's projects. Instead, make your own virtualenv and install the package there (use mkvirtualenv to create it, workon to activate it, then pip to install packages into it). Also, make a backup of your virtualenv, because newly created virtualenvs will be gone when the lab image is updated.
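Before running pip install, it helps to know which environment is currently active. The sketch below is one common way to detect this from Python, assuming the usual behaviour of virtualenv and venv; the function name `in_virtualenv` is hypothetical.

```python
import sys


def in_virtualenv():
    """Return True when the running interpreter belongs to a virtual environment."""
    # Older virtualenv releases set sys.real_prefix; venv and newer
    # virtualenv releases make sys.prefix differ from sys.base_prefix.
    if hasattr(sys, "real_prefix"):
        return True
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


if __name__ == "__main__":
    print("Environment root:", sys.prefix)
    print("Inside a virtualenv:", in_virtualenv())
```

The printed environment root shows where pip would install packages, which makes it easy to see whether you are in your own virtualenv or in a shared one.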

Spyder

Spyder (Scientific Python Development Environment) is an IDE for Python. It can be run from a command prompt. If you want to use Spyder in the default Python 3.7.6 64-bits environment, you can just type Spyder from the command prompt. If you want to use Spyder from the Python36 virtualenv, type workon python36 and then type Spyder3. If you have created your own virtualenv, make sure that Spyder is installed.

PyCharm

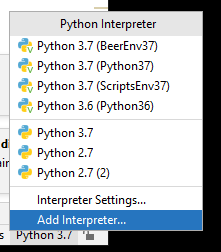

PyCharm is installed on our lab computers. It is a Python IDE. In the lower-right corner, it displays its current Python environment. By clicking on that name, you can change the interpreter and choose from the existing virtualenvs that PyCharm knows, or you can add your own virtualenv.

Batch files

If you are working from a virtualenv other than the default and you don't want to open a command window and type workon <virtualenv> and python <myscript.py> every time, you might want to make a batch file. Create a text file and type:

call workon <virtualenv>

python <myscript.py>

Save the file into the folder where your script is, but change the extension from .txt to .bat, for example, save the file as startmyscript.bat.
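If you have many scripts, the two lines of the batch file can also be generated from Python. The following is a small sketch; the function name `launcher_text` is invented for this example, and the script name is quoted so that file names with spaces keep working.

```python
def launcher_text(virtualenv_name, script_name):
    """Return the contents of a batch file that activates a virtualenv and runs a script."""
    lines = [
        # "call" is needed so the batch file continues after workon finishes.
        "call workon {}".format(virtualenv_name),
        'python "{}"'.format(script_name),
    ]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(launcher_text("Python36", "myscript.py"))
```

Writing the returned text to a file such as startmyscript.bat in the script's folder gives the same result as creating the batch file by hand.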

32-bits Psychopy (Tobii users)

Our lab computers have a special virtualenv called Python36. This virtualenv contains everything that PsychoPy needs, and it contains the tobii-research package. It also contains the packages that are needed to use the cv.dll library.

To start your script you can:

- start Psychopy using the icon on the desktop that uses the 32-bit version.

- from a command window, type workon Python36, then type Psychopy to start the 32 bit Psychopy version.

- from a command window, type workon Python36, then type Spyder3 to start the Spyder IDE.

- choose the Python 3.6 (Python36) python interpreter in PyCharm.

- from a command window type workon Python36, then type python <myscript.py> to start your script. Or make a batch file that does this.